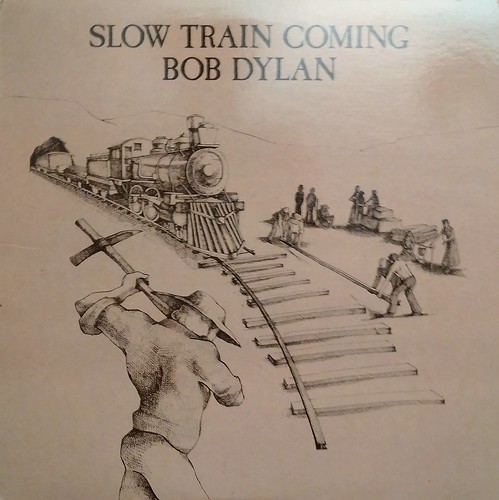

“Slow Train Coming”, the artwork from the cover of Bob Dylan’s album. Photo by Logos: the Art of Photography on Flickr.

The cover of Bob Dylan’s album “Slow Train Coming” shows people literally laying a railway just ahead of a train which is, in theory, a-comin’. Just very slowly. The European Commission’s antitrust decision against Google is just such a train. A €2.42bn train. Big, but deathly slow.

(If you need any background about the EC and Google and why this all matters, I wrote about it in 2015. Slow train.)

TL;DR:

• Google has been squashing rival shopping sites since mid-2006;

• the EC was alerted in summer 2009 after many efforts by sites to get responses from Google failed;

• do we seriously think Google’s going to change its behaviour?

• why isn’t Foundem getting a slice of the fine?

• antitrust moves too slowly in the modern era

The European Commission’s fine of €2.42bn on Google has been just like that train: a damn long time coming. The original complainant, the “vertical search” site Foundem, first noticed something funny happening to its position in search results back in 2006: it was being penalised for no apparent reason.

The penalty (search) box

Foundem was the brainchild of Shivaun and Adam Raff (it really is like their child, and they are brainy; I’ve met them on several occasions as this antitrust case has inched its way through the system). By this time the site was only six months old, focussed on what it saw as a gap – or at least growing niche – in the market: “vertical search”, comparing one specific product, rather than “horizontal search” as practised by Google and Bing (and many also-rans). You can probably think of other “vertical search” sites: Kelkoo was very big at one point. There’s also one called Amazon, though at that time it did a lot of the fulfilment as well; Foundem would find results from other shopping sites, so that it was like a meta-search engine. Amazon, at the time, wasn’t, though as it has become more of a marketplace rather than a fulfilment company that description is increasingly accurate.

But for Foundem in June 2006, this was remote. It had been caught by an algorithmic search penalty which hit lots of vertical search companies. It filed “reconsideration requests” to Google, which it says the company ignored.

(See the timeline for yourself at Foundem’s site.)

In August 2006 it was hit by an “AdWords Penalty”: this suggested that the “landing pages” people arrived at were of such low quality that it would have to pay much more to be able to buy an AdWord (Google advertising position). How much more? It was raised, they say, from about 5p/click to £5/click.

It’s summer 2006, and as the Raffs put it in their timeline, “Foundem was excluded from Google’s natural and paid search results, both of which are essential channels to market for any internet-based business.” That would be near enough a death penalty for any consumer-facing business; fortunately they found other outlets, such as powering shopping searches on magazine websites for IPC, Bauer and others.

The Raffs kept lobbying Google for reconsideration, and kept being brushed off; meanwhile Google launched Universal Search (integrating Google Maps and Google News and YouTube results into a box at the top which favoured Google products and pushed rival services further down the search rankings).

In December 2008 a TV show named Foundem the UK’s best price comparison site. Google meanwhile didn’t relent on its penalty against Foundem’s position in search results.

Finally, in July 2009 Foundem had its first meeting with the EC’s DGComp – the arm of the European Commission which investigates antitrust cases.

Eight years and more of hurt

That’s almost exactly eight years ago. It’s taken absolutely ages for the EC to act on this, giving Google plenty of time to tighten its grip on the business, and even for the whole search landscape to shift – from one where the desktop has primacy to one where many searches begin on mobile, inside apps.

There’s lots of applause today from Europeans about the fact that Margrethe Vestager didn’t give up on this case, and that a record fine has been imposed (and that if Google doesn’t alter its behaviour in 90 days, the daily fine will be eye-watering). “Better later than never, but seven years have been still an eternity for some market players, in particular European SMEs [small and medium enterprises],” to quote the MEPs Ramon Tremosa i Balcells and Andreas Schwab.

There’s the usual eye-rolling from a number of American observers, who say “which AMERICAN company will be next?”, and ignore the fact that the Federal Trade Commission’s investigation in 2011/12 discovered that Google’s own user testing found that people preferred seeing other vertical search engine results in the organic search results; and also ignore the fact that DGComp fines all sorts of European companies for antitrust and cartel actions of all flavours. (The decision before Google was fining three car lighting system producers over cartel behaviour.)

Also, for those eye-rolling American (and other) readers: European antitrust doctrine differs in one very significant way from the American flavour. In the US, if you use a monopoly in one space to take over another but consumers benefit overall, there’s no case to answer. This was why the FTC dropped its case (on a 4-0-1 decision, ie one abstention, no opposition). Scroll down in that FTC release to “search bias” where it says the introduction of Universal Search “could be plausibly justified as innovations that improved Google’s product and the experience of its users.” A bit milquetoast, that recommendation.

In the EU, however, the question is whether the dominant company’s behaviour stifles competition, not what happens to consumers. This refusal to consider “consumer surplus” infuriates and astonishes a significant number of American observers, but it’s how it’s done here.

But, but, but. I very much expect that Google will appeal this before the 90-day deadline, and that this will mean it doesn’t yet have to change its behaviour, nor pay the fine. Do you think that this might be a long-drawn-out process which will grind interminably through the courts, during which Google won’t change how it displays results? I do.

Meanwhile Foundem and all the other vertical search companies which the EC is ostensibly protecting have been almost crushed. If there were any justice, they’d be getting a slice of the fine. After all, companies which report cartel action either get some payment, or (if they’re part of the cartel) let off some of the fine.

Slow train, now arriving

This is the reality of antitrust: in technology especially, the dominance of these companies and the power of their networks means that the decision comes too late to help those who were originally affected. It was certainly the case with Microsoft and Netscape; it’s clearly the case here. Who knows how big Foundem and Kelkoo and all the others might have been if Google hadn’t been able to use its dominance in straight search to annex the vertical search space?

Some would really like the fine to have teeth. Tremosa i Balcells commented: “When it comes down to the fine, I always said: first, you pay the fine and, then, you restore competition and the level playing field like it was the case with Microsoft. I believe that the fine should be retroactive for each year since the beginning of the wrongdoing by Google. This fine is far from the theoretical fine of 10% of Google annual revenues. The fine should be multiplied by the number of years since the start of the damage to competitors. Moreover, the behaviour of Google since the SO [Statement of Objections] and from today should be taken into account as well. Time helps monopolies, not SMEs.”

The argument of course is that antitrust actions serve to make the dominant company change its future behaviour: a fine of that size, and the threat of continuing fines, and particularly the tedious legality of it all, burdens the company’s decision-making process so that its executives all act as though someone suggested they play on the electrified railway when the idea of moving into an “adjacent” business comes up. (It certainly worked with Microsoft.)

This will be the real acid test of the EC’s action: will it make Google’s internal culture change? We won’t know the answer to that for some time. Slow train coming.