Margrethe Vestager, the Danish-born EC competition commissioner. Photo by Radikal Venstre on Flickr.

“Google doesn’t have any friends,” I was told by someone who has watched the search engine’s tussle with the US Federal Trade Commission and latterly with the European Commission. “It makes enemies all over the place. Look how nobody is standing up for it in this fight. It’s on its own.”

The apparently accidental release to the Wall Street Journal of the FTC staff’s 2012 report on whether to sue Google over antitrust has highlighted just how true that is. We only got every other page of one of two reports. But that gives us a lot to chew on as the EC prepares a Statement of Objections against Google that will force some sort of settlement. (It’s obvious that the EC is going for an SOO: three previous attempts to settle without one foundered, and the new competition commissioner Margrethe Vestager clearly isn’t going to go down the same road into the teeth of political disapproval.)

The Wall Street Journal has published the FTC staffers’ internal report to the commissioners. And guess what? It shows them outlining many ways in which Google was behaving anticompetitively.

The FTC report says Google

• demoted rivals for vertical business (such as Shopping) in its search engine results pages (SERPs), and promoted its own businesses above those rivals, even when its own offered worse options

• scraped content such as Amazon rankings in order to populate its own rankings for competing services

• scraped content from sites such as Yelp, and when they complained, threatened to remove them from search listings

• crucially, acted in a way that (the report says) resulted “in real harm to consumers and to innovation in the online search and advertising markets. Google has strengthened its monopolies over search and search advertising through anticompetitive means, and has forestalled competitors and would-be competitors’ ability to challenge those monopolies, and this will have lasting negative effects on consumer welfare.”

• among the companies that complained to the FTC, confidentially, were Amazon, eBay, Yelp, Shopzilla and more. Amazon and eBay stand out, because they’re two of Google’s biggest advertisers – yet there they are, saying they don’t like its tactics.

Now the WSJ has published what it got from the FTC: every other page of the report prepared by the staff looking at what happened, with some amazing stories. It’s worth a read. Particularly worth looking at is “footnote 154”, which is on p132 of the physical report, p71 of the electronic one on the WSJ. This is where it shows how Google put its thumb on the scale when it came to competing with rival vertical sites.

What does Google want, though?

Before you read the report, though, bear in mind the prism through which you have to understand Google’s actions.

Google’s key business model is to offer search across the internet, and sell ads against people’s searches for information (AdWords) or reading on sites where it controls the ads (AdSense).

For that business model to work at maximum efficiency, Google needs

• to be able to offer the “best” search results, as perceived by users (though it’s willing to sacrifice this – see later – and you could ask whether the majority of users will notice)

• to have the maximum possible access to information across the internet to populate search results. Note that this is why it’s in Google’s interests for cost barriers to information to be pushed to zero, even if that isn’t in the interests of the people or organisations that initially gather and actually own and collate the information; it’s also in Google’s interests to ignore copyright for as long and as far as possible until forced to comply, because that means it can use datasets of dubious legality to improve search results

• to capture as much search advertising as it can

• to capture as much online display advertising as it can

None of those is “evil” in itself. But equally, none is fairies and kittens. It’s rapacious; the image in Dave Eggers’s The Circle (a parable about Google), of a transparent shark that swallows everything it can and turns it into silt, is apt.

YouTube (which it has owned since 2006) is an interesting supporting example here. It’s in Google’s interests for there to be as much material as possible on it, regardless of copyright, so that it can show display adverts (those irritating pre-rolls). It’s in its interests for videos to follow endlessly unless you stop them (an “innovation” it has recently introduced, and from which you have to opt out).

It’s also in its interests for YouTube to rank as highly as possible in search results even if it isn’t the optimum, original or most-linked source of a video, because that way Google captures the advertising around that content, rather than any content owner capturing value (from rental or sale or associated advertising).

It’s also in its interests to do only as much as it absolutely has to in order to remove copyrighted content – and even then, it will often suggest to the copyright owner instead that they just overlook the copyright infringement, and monetise it instead in an ad revenue split. Where of course Google gets to decide the split. (Example: film studios, and all the pirated content from their productions; record labels, and all the uploaded content there, which is monetised through ContentID. Pause for a moment and think about this: you and I wouldn’t have a hope of making money from content other people had uploaded without permission to our website. And particularly not to be able to decide the revenue split from any such monetisation. That Google can and does with YouTube shows its market power – and also the weakness of the law in this space. The record labels couldn’t get a preemptive injunction; so they were left with a fait accompli.)

Think vertical

In building associated businesses (aka “vertical search” – so-called because they’re specific to a field) – such as Google Shopping (where listing was at first free, but then became paid-for just like AdWords), or Google Flight Search (where Google could benefit from being top), or Google Product Search, the FTC report confirmed what everyone had said repeatedly: Google pushed its own product above rivals, even when its own were worse, and even at its own expense.

The FTC report is instructive here. It cites a number of examples where Google either forced other sites to give it content, or took that content (even when the other sites didn’t want it to), or sacrificed search quality in order to push its own vertical products.

Forcing sites to give it content? In building Google Local, Google copied content from Yelp and many other local websites. When they protested – Yelp cut off its data feed to Google – Google tried for a bit, and then came up with a masterplan: it set up Google Places and told local websites that they had to allow it to scrape their content and use it there, or it would exclude them altogether from web search. Ta-da! There were all the reviews that Google needed to populate Google Local, provided by its putative rivals for free, despite all the effort and cost it had taken them to gather them.

Classic Google: access other people’s content for free; ignore the consequential benefits. For Google, it isn’t important whether those local websites survive or not, because it has their data. For a company like Yelp, which relies on people coming to its site and using it, and inputting data, and makes its money from local ads and brand ads, any move by Google to annex its content is a serious threat.

This also points to Google’s dominance. Sites like Shopzilla, the FTC noted, were scared to deny Google free rein over their data because they worried that people wouldn’t find them.

That’s arm-twisting of the first order.

Google was definitely worried about verticals taking away from its core business: in 2005 Bill Brougher, a Google product manager, said in an internal email that “the real threat” of Google not “executing on verticals” (ie having its own offerings) was

“(a) loss of traffic from Google.com because folks search elsewhere for some queries (b) related revenue loss for high spend verticals like travel (c) missing opportunity if someone else creates the platform to build verticals (d) if one of our big competitors builds a constellation of high quality verticals, we are hurt badly”.

You’ve got questions

Obviously, you’ll be going “but..”:

1) But aren’t “verticals” just another form of search? No – though they need search to be visible. A retailer of any sort is a “vertical”: a shop needs to know what it has to sell in order to offer it for sale. But populating the shop, tying up deals with wholesalers, figuring out pricing – those aren’t “search”. Amazon is a “vertical”; Moneysupermarket is a “vertical” (where it sells various deals, and wraps them with information in its forums). Hotel booking sites, shopping sites, they’re all “verticals”.

Their problem is that they need what they’re offering (“hotel tonight in Wolverhampton”) to be visible via general search, but they don’t want that to be something that can be scraped easily.

Amazon, for example, gave Google limited access to its raw feed; but Google wanted more, including star ratings and sales rankings. Amazon didn’t want to give that up for bulk use (though it was happy for it to be visible individually, when users called a page up). Google simply scraped the Amazon data, page by page – and used the rankings to populate its own shopping services. It did the same with Yelp – which eventually complained and sent a formal cease-and-desist notice.

In passing, this is a classic example of Google having it both ways: if your dataset is big enough, as with Amazon’s, then Google – and its supporters – can claim that scooping up of extra data such as shopping rankings and star ratings is “fair use”; if your dataset is small, then you’re probably small too, and will be threatened by the possibility of exclusion if you refuse to yield it up – witness Shopzilla, above.

(Side note: Microsoft wasn’t above doing something similar when it was dominant. Just read about the Stac compression case: Microsoft got a deep look at a third-party technology that effectively doubled your storage space in the bad old days of MS-DOS; then it took the idea and rolled it into MS-DOS for free, rather than licensing it. Monopolists act in very similar ways.)

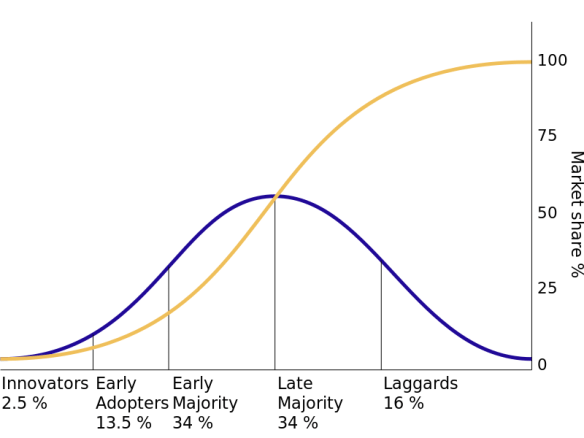

2) But rival search sites are “just a click away”. You don’t have to use Google. The FTC acknowledges this point, which is one that Eric Schmidt and Google have made often. There’s a true/not true element to this. The search engine business effectively collapsed after the dot-com boom in 2000: Alta Vista, which was then the biggest (in revenue and staffing terms), lost all its display ads. And Google did the job better. That’s undeniable. But for at least five crucial years, it had pretty much zero competition. Microsoft was in disarray, and Google was able to attract both search data and advertisers to corner the market.

What’s more, it was the default for search on Firefox and Safari, which helped propel its use. The combination of “better, unrivalled and default” made it a monopoly. Most people don’t even know there’s an alternative, and couldn’t find one if asked. Just listen to how many times in everyday conversation – on the radio, in the street, in newspapers – you hear “google” used as a verb.

One thought on that “just a click away” – Google has poured huge amounts of money into making sure that people aren’t presented with any other search engine to begin with. The Mozilla organisation’s biggest source of funds for years has been Google, paying to be its default search (until last autumn, when Yahoo paid for the US default and Google, I understand, didn’t enter a bid – because Google Chrome is now bigger than Firefox). Google pays Apple billions every year to be the default search on Safari on the Mac, iPhone and iPad.

Clearly, Google doesn’t want to be in the position where it’s the one that’s a click away. That’s because it knows that the vast majority of people – usually 95% or so, for any setting – use the defaults.

The reality is that we are where we are: Google is the most-used search engine, it has the largest number and value of search advertisers, and crucially it is annexing other markets in verticals. This dominance/annexation nexus is exactly the point that Microsoft was at with Windows and Internet Explorer.

The difference, the FTC acknowledged, is in the “harm to consumers”. Antitrust, under the US Sherman Act, rests on three legs: monopoly of a market; using that monopoly to annex other markets; harm to consumers. In US v Microsoft, the “harm to consumers” was that by forcing inclusion of Internet Explorer,

“Microsoft foreclosed an opportunity for OEMs to make Windows PC systems less confusing and more user-friendly, as consumers desired” and “by pressuring Intel to drop the development of platform-level NSP software, and otherwise to cut back on its software development efforts, Microsoft deprived consumers of software innovation that they very well may have found valuable, had the innovation been allowed to reach the marketplace. None of these actions had pro-competitive justifications”; furthermore, in the final line of the judgement, Thomas Penfield Jackson says “The ultimate result is that some innovations that would truly benefit consumers never occur for the sole reason that they do not coincide with Microsoft’s self-interest.”

In the case of the FTC and Google, the harm to consumers is less clear-cut; in fact, that’s part of why the FTC held off. Yet it’s hard to look at the tactics that Google used – grabbing other companies’ content, demoting vertical rivals in search, promoting its own verticals even though they’re worse – and not see the same restriction of innovation going on. Might Shopzilla have turned into a rival to Amazon? Could Yelp have built its own map service? Or become something else? History is full of companies which have sort-of-accidentally “pivoted” into something remarkable: Microsoft with MS-DOS for IBM (a contract it got because the company IBM first contacted didn’t respond); Instagram into photos (it was going to be a rival to Foursquare).

What’s most remarkable about the demotion of rivals is that users actually preferred the rivals to be ranked higher according to Google’s own tests.

Footnote 154: the smoking gun

In footnote 154 (on page 132 of the report, but referring to page 29 of the body – which is sadly missing), the FTC describes what happened in 2006-7, when Google was essentially trying to push “vertical search” sites off the front page of results. Google would test big changes to its algorithms on “raters” – ordinary people who were asked to judge how much better a set of SERPs was, according to criteria given them by Google. I’m quoting at length from the footnote:

Initially, Google compiled a list of target comparison shopping sites and demoted them from the top 10 web results, but users preferred comparison shopping sites to the merchant sites that were often boosted by the demotion. (Internal email quote: “We had moderate losses [in raters’ rating – CA] when we promoted an etailer page which listed a single product because the raters thought this was worse than a bizrate or nextag page which listed several similar products. Etailer pagers which listed multiple products fared better but were still not considered better than the meta-shopping pages like bizrate or nextag”).

Google then tried an algorithm that would demote the CSEs [comparison shopping engines], but not below sites of a certain relevance. Again, the experiment failed, because users liked the quality of the CSE sites. (Internal email quote: “The bizrate/nextag/epinions pages are decently good results. They are usually formatted, rarely broken, load quickly and usually on-topic. Raters tend to like them. I make this point because the replacement pages that we promoted are occasionally off-topic or dead links. Another positive aspect of the meta-shopping pages is that they usually give a variety of choices… The single retailer pagers tend to be single product pages. For a more general query, raters like the variety of choices the meta-shopping site seems to give.”)

Google tried another experiment which kept a CSE within the top five results if it was already there, but demoted others “aggressively”. This too resulted in slightly negative results.

Unable to get positive reviews from raters when Google demoted comparison shopping sites, Google changed the raters’ criteria [my emphasis – CA] to try to get positive results.

Previously, raters judged new algorithms by looking at search results before and after the change “side by side” (SxS), and rated which search result was more relevant in each position. After the first set of results, Google asked the users to instead focus on the diversity and utility of the whole set of results, rather than result by result, telling users explicitly that “if two results on the same side have very similar content then having those two results may not be more valuable than just having one.” When Google tried the new rating criteria with an algorithm which demoted CSEs such that sometimes no CSEs remained in the top 10, the test again came back “solidly negative”.

Google again changed its algorithm to demote CSEs only if more than two appeared in the top 10 results, and then, only demoting those beyond the top two. With this change, Google finally got a slightly positive rating in its “diversity test” from its raters. Google finally launched this algorithm change in June 2007.
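
(For the technically minded, here is a rough Python sketch of what that final rule amounts to. It is purely illustrative, not Google’s code: the function name `demote_extra_cses`, the `(url, is_cse)` result format and the decision to park the demoted sites just below the top 10 are all my assumptions, since the footnote doesn’t say where the demoted results ended up.)

```python
from typing import List, Tuple

# A search result is modelled as (url, is_cse). This shape is hypothetical.
Result = Tuple[str, bool]


def demote_extra_cses(results: List[Result], top_n: int = 10, keep: int = 2) -> List[Result]:
    """Keep at most `keep` comparison shopping sites in the top `top_n`.

    If the top `top_n` already holds `keep` or fewer CSEs, the ranking is
    returned unchanged. Otherwise the surplus CSEs are held back and placed
    directly after the top `top_n` (an assumption), while lower-ranked
    non-CSE results move up to fill the gaps.
    """
    head: List[Result] = []   # the rebuilt first page
    held: List[Result] = []   # surplus CSEs pushed off the first page
    cses_in_head = 0
    idx = 0
    while idx < len(results) and len(head) < top_n:
        result = results[idx]
        idx += 1
        if result[1] and cses_in_head >= keep:
            held.append(result)
        else:
            if result[1]:
                cses_in_head += 1
            head.append(result)
    # Everything not yet examined keeps its original relative order.
    return head + held + results[idx:]


ranking = [
    ("bizrate", True), ("shop-a", False), ("nextag", True),
    ("epinions", True), ("shop-b", False), ("shop-c", False),
]
reranked = demote_extra_cses(ranking, top_n=4, keep=2)
print([url for url, _ in reranked])
```

With a four-result “first page” and a cap of two, the third comparison site drops to just below the page while a merchant site moves up into its place. A ranking with two or fewer comparison sites on the first page passes through untouched.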

Here’s the point to hold on to: users preferred having the comparison sites on the first page. But Google was trying to push them off because, as page 28 of the report explains,

“While Google embarked on a multi-year strategy of developing and showcasing its own vertical properties, Google simultaneously adopted a strategy of demoting, or refusing to display, links to certain vertical websites in highly commercial categories. According to Google, the company has targeted for demotion vertical websites that have ‘little or no original content’ or that contains ‘duplicative’ content.”

On that basis, wouldn’t Google have to demote its own verticals? There’s nothing original there. But Google also decided that comparison sites were “undesirable to users” – despite all the evidence that it kept getting from its raters – while at the same time deciding that its own verticals, which sometimes held worse results, were desirable to users.

Clearly, Google doesn’t necessarily pursue what users perceive to be the best results. It’s quite happy to abandon that in the pursuit of what’s perceived as best for Google.

Fair fight?

Now, that’s fair enough – up to a point. Google can mess around with its SERPs but only until it uses its search monopoly to annex other markets to the disbenefit of consumers. It’s easy to argue that in preventing rival verticals getting visibility, it reduced the options open to consumers. What’s much harder is proving harm. That’s where the FTC stalled.

But in Europe, that last part isn’t a block. Monopoly power together with annexation is enough to get you hauled before the European Commission’s DGCOMP (directorate-general of competition). The FTC and EC coordinated closely on their investigations, to the extent of swapping papers and evidence. So the EC DGCOMP has full copies of both the FTC reports. (If only they would leak..)

There’s been plenty of complaining that the EC’s pursuit of Google is just petty nationalism. People – well, Americans – point to the experiment where papers prevented Google News linking to them. Their traffic collapsed. They came back to Google News. Traffic recovered. Sure, this shows that Google is essential; cue Americans crowing about how stupid the newspapers were.

However, if you stop to think about the meaning of the word “monopoly”, that’s not necessarily a good thing for Google to have demonstrated – even unwittingly – in Europe. Now the publishers, who have what could generously be called a love-hate relationship with Google, can show yet another piece of evidence to DGCOMP about the company’s dominant position.

What happens next?

Vestager will issue a Statement of Objections (which, sadly, won’t be public) some time in the next few weeks; that will go to Google, which will redact the commercially confidential bits, then send it back to Vestager, who will show it to complainants (of whom there are quite a few), who will comment and then give it back to Vestager.

Then the hard work starts. Whether Google seeks to settle will depend on what Vestager is demanding. Will she try to forestall Google from foreclosing emerging spaces – the future verticals we don’t know about? Or just try to change how it treats existing verticals? (Ideally, she’d do both.) Many of the issues around scraping and portability of advertising which Almunia enumerated in May 2012 have been settled already (now that Google has wrapped them up; the scraped datasets aren’t coming out of its data roach motel).

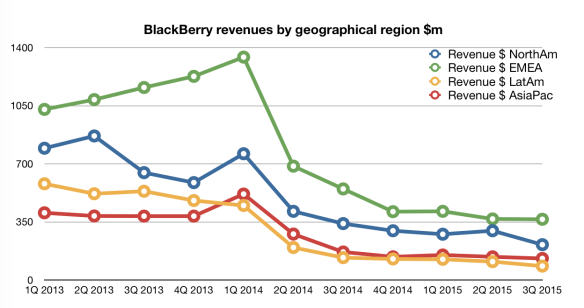

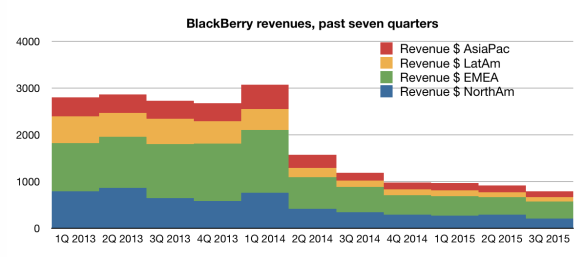

Neither is going to make all the revenue lost to Google favouring its own services come back. And as with record labels and YouTube, it’s likely that Google will try to stretch this out for as long as possible; the more it does, the more money it gets, and the less leverage its rivals have.

Even so, I can’t help thinking that rather as with Microsoft and Internet Explorer, the chance to act decisively has long been missed. Instead, a different phenomenon is pushing Google’s dominance on the desktop aside: mobile. Mobile ads are cheaper, see fewer clicks, and search is used less compared to apps. I’d love to see a breakdown of Google’s income from mobile between app sales and search ad sales (and YouTube ad sales): I wonder if apps might be the bigger revenue generator. Yelp, meanwhile, seems to do OK in the new world of mobile. It’s possible – maybe even likely – that Google’s dominance of the desktop will be, like Microsoft’s, broken not by the actions of legislators but by the broader change in technologies.

Right and wrong lessons

But the wrong lesson to take from that would be “legislators shouldn’t do anything”. Because inaction always leaves room for a dominant company to corner a market and foreclose on real innovation. Big companies which become dominant need to worry that legislators will come after them, because even that consideration makes them play more fairly.

And that’s why the Google tussle with the FTC and EC matters. It might not make any difference to those that feel wronged by Google on the desktop. But it could forestall whoever comes next, and it will focus the minds of the legislators and the would-be rivals. Google might not have any friends. There might come a time when it will wish it had some, though.