Rachel Maddow: a challenge for AI categorisation systems. CC-licensed photo by West Point-The U.S. Military Academy on Flickr.

A selection of 11 links for you. Not paralysed by legislative dithering. I’m @charlesarthur on Twitter. Observations and links welcome.

AI thinks Rachel Maddow is a man (and this is a problem for all of us) • Medium

»

As more machine learning systems get used in production, it is increasingly important to adopt better testing beyond the test dataset. Unlike traditional software quality assurance, in which systems are tested to ensure that features operate as expected, machine learning testing requires the curation and generation of new datasets and a framework capable of dealing with confidence levels rather than the traditional 404 and 500 error codes from web servers.

My partner Alex and I have been working on tools to support machine learning in production. As she wrote in The Rise of the Model Servers, as machine learning moves from the lab into production, additional security and testing services are required to fully complete the stack. One of our tools, ML Safety Server, allows the rapid generation and management of additional test datasets and the tracking of how these datasets perform over time. It is from using the Safety Server that we discovered that AI thinks Rachel Maddow is a man.

We’ve been using public cloud APIs to prototype the Safety Server. We discovered the Rachel Maddow issue when testing image recognition services. AWS, Azure, Clarifai, and Watson have all misgendered Rachel Maddow when given recent images of her.

«

So basically he’s saying that with great computing power comes great responsibility to make sure that the training and test sets are really, really good.
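To make the "confidence levels rather than error codes" point concrete, here's a minimal Python sketch of a check over a curated test set. Everything in it is hypothetical: the `Prediction` shape, the `evaluate` helper, the 0.8 threshold and the stub classifier with its made-up results are my assumptions for illustration, not the Safety Server's API or any cloud vendor's.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Tuple


@dataclass
class Prediction:
    label: str         # label the classifier chose
    confidence: float  # classifier's own score in [0, 1]


def evaluate(
    classify: Callable[[str], Prediction],
    dataset: Iterable[Tuple[str, str]],
    threshold: float = 0.8,  # assumed cut-off for "confident"
) -> dict:
    """Run `classify` over (item, expected_label) pairs.

    Returns accuracy plus the items that were wrong *and* confident,
    which are the failures a plain pass/fail check would hide.
    """
    total = 0
    correct = 0
    confident_errors: List[str] = []
    for item, expected in dataset:
        pred = classify(item)
        total += 1
        if pred.label == expected:
            correct += 1
        elif pred.confidence >= threshold:
            confident_errors.append(item)
    accuracy = correct / total if total else 0.0
    return {"accuracy": accuracy, "confident_errors": confident_errors}


# Stub standing in for a real image-recognition call (invented data).
_FAKE_RESULTS = {
    "img_a.jpg": Prediction("cat", 0.97),
    "img_b.jpg": Prediction("dog", 0.91),  # wrong, and confident
    "img_c.jpg": Prediction("dog", 0.55),  # wrong, but unsure
    "img_d.jpg": Prediction("dog", 0.88),
}


def fake_classifier(item: str) -> Prediction:
    return _FAKE_RESULTS[item]


curated = [
    ("img_a.jpg", "cat"),
    ("img_b.jpg", "cat"),
    ("img_c.jpg", "cat"),
    ("img_d.jpg", "dog"),
]

report = evaluate(fake_classifier, curated)
print(report)
```

The design choice worth noting is that a wrong answer given with low confidence and a wrong answer given with high confidence are reported separately; only the second kind lands in `confident_errors`.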

Encryption efforts in Colorado challenge crime reporters, transparency • Columbia Journalism Review

»

Colorado journalists on the crime beat are increasingly in the dark. More than two-dozen law enforcement agencies statewide have encrypted all of their radio communications, not just those related to surveillance or a special or sensitive operation. That means journalists and others can’t listen in using a scanner or smartphone app to learn about routine police calls.

Law enforcement officials say that’s basically the point. Scanner technology has become more accessible through smartphone apps, and encryption has become easier and less expensive. Officials say that encrypting all radio communications is good for police safety and effectiveness, because suspects sometimes use scanners to evade or target officers, and good for the privacy of crime victims, whose personal information and location can go out over the radio.

They also cite misinformation as a reason to encrypt. Kevin Klein, the director of the Colorado Division of Homeland Security and Emergency Management, said people listening to scanner traffic during a 2015 Colorado Springs shooting live-tweeted the incident and, in doing so, spread false information about the shooter’s identity and the police response.

But encrypting all radio communications makes it harder to cover crime. Journalists usually don’t use scanner traffic directly in their reports, but they often use the traffic to learn about and respond immediately to breaking news. In that sense, expanding encryption reduces transparency.

«

I’m pretty sure that in the UK police communications have been secure for years – you can’t tap into them. These days, Twitter and Facebook are how people find out about stuff.

Faking it: how selfie dysmorphia is driving people to seek surgery • The Guardian

»

Sometimes her followers would suggest meeting in person. “Then it would be like, ‘I have to look like my selfie.’” It was around this time, the height of her Snapchat obsession, that Anika started contacting cosmetic doctors on Instagram.

The phenomenon of people requesting procedures to resemble their digital image has been referred to – sometimes flippantly, sometimes as a harbinger of end times – as “Snapchat dysmorphia”. The term was coined by the cosmetic doctor Tijion Esho, founder of the Esho clinics in London and Newcastle. He had noticed that where patients had once brought in pictures of celebrities with their ideal nose or jaw, they were now pointing to photos of themselves.

While some used their selfies – typically edited with Snapchat or the airbrushing app Facetune – as a guide, others would say, “‘I want to actually look like this’, with the large eyes and the pixel-perfect skin,” says Esho. “And that’s an unrealistic, unattainable thing.”

A recent report in the US medical journal JAMA Facial Plastic Surgery suggested that filtered images’ “blurring the line of reality and fantasy” could be triggering body dysmorphic disorder (BDD), a mental health condition where people become fixated on imagined defects in their appearance.

«

Daily Mail demands browser warning U-turn • BBC News

»

The Daily Mail is calling for a web browser alert that criticises its journalism to be changed.

The NewsGuard plug-in currently brings up a warning that says the newspaper’s website “generally fails to maintain basic standards of accuracy and accountability”. It has given this advice since August. But the matter came to prominence last week, after Microsoft updated its Edge browser app for Android and iOS devices and built in NewsGuard.

This prompted several other media outlets to report the story. “We have only very recently become aware of the NewsGuard start-up and are in discussions with them to have this egregiously erroneous classification resolved as soon as possible,” said a spokesman for Mail Online.

At present, NewsGuard must be switched on by users of Microsoft’s Edge app, but the BBC understands there are plans for it to become the default option in the future.

The New York-based service – which is independent of Microsoft – also has ambitions to include its tool in further products from the Windows developer as well as other tech firms. But for now, it can be used as an add-on extension in the desktop version of web browsers including Edge, Google’s Chrome, Mozilla’s Firefox and Apple’s Safari.

«

The NewsGuard warning for the Mail says “Proceed with caution: this website generally fails to maintain basic standards of accuracy and accountability.” I’d suggest it’s wrong about the accuracy – the Mail may be biased, but it’s fiercely proud of its fact-finding. (Quite how it *arranges* those facts can be up for debate.) The accountability when it gets stuff wrong is a lot worse though. It hates to admit that it screwed up.

Data broker that sold phone locations used by bounty hunters lobbied FCC to scrap user consent • Motherboard

»

Earlier this month Motherboard showed how T-Mobile, AT&T, and Sprint were selling cell phone users’ location data that ultimately ended up in the hands of bounty hunters and people unauthorized to handle it. That data trickled down from the telecommunications giants through a complex network of middlemen and data brokers. One of those third parties was Zumigo, a company that gets location data access directly from the telcos and then sells it for a profit.

Motherboard has now unearthed a presentation that Zumigo gave to the Federal Communications Commission (FCC) in late 2017 in which it asked the agency to place even fewer restrictions on how some of the data it sells can be used, and specifically asked for the agency to loosen user consent requirements for data sharing.

“As breaches become more prevalent and as consumers rely more on mobile phones, there is a tipping point where financial and personal protections begin to equal, or outweigh, privacy concerns,” one of the slides reads.

Another slide titled “solutions” suggests that the FCC loosen current consent requirements that are included in cell phone providers’ terms of service, allowing carriers to use vaguer, “more flexible” language.

«

Wouldn’t it be great if the US had some sort of laws around this stuff?

A simple camera and an algorithm let you see around corners • Scientific American

»

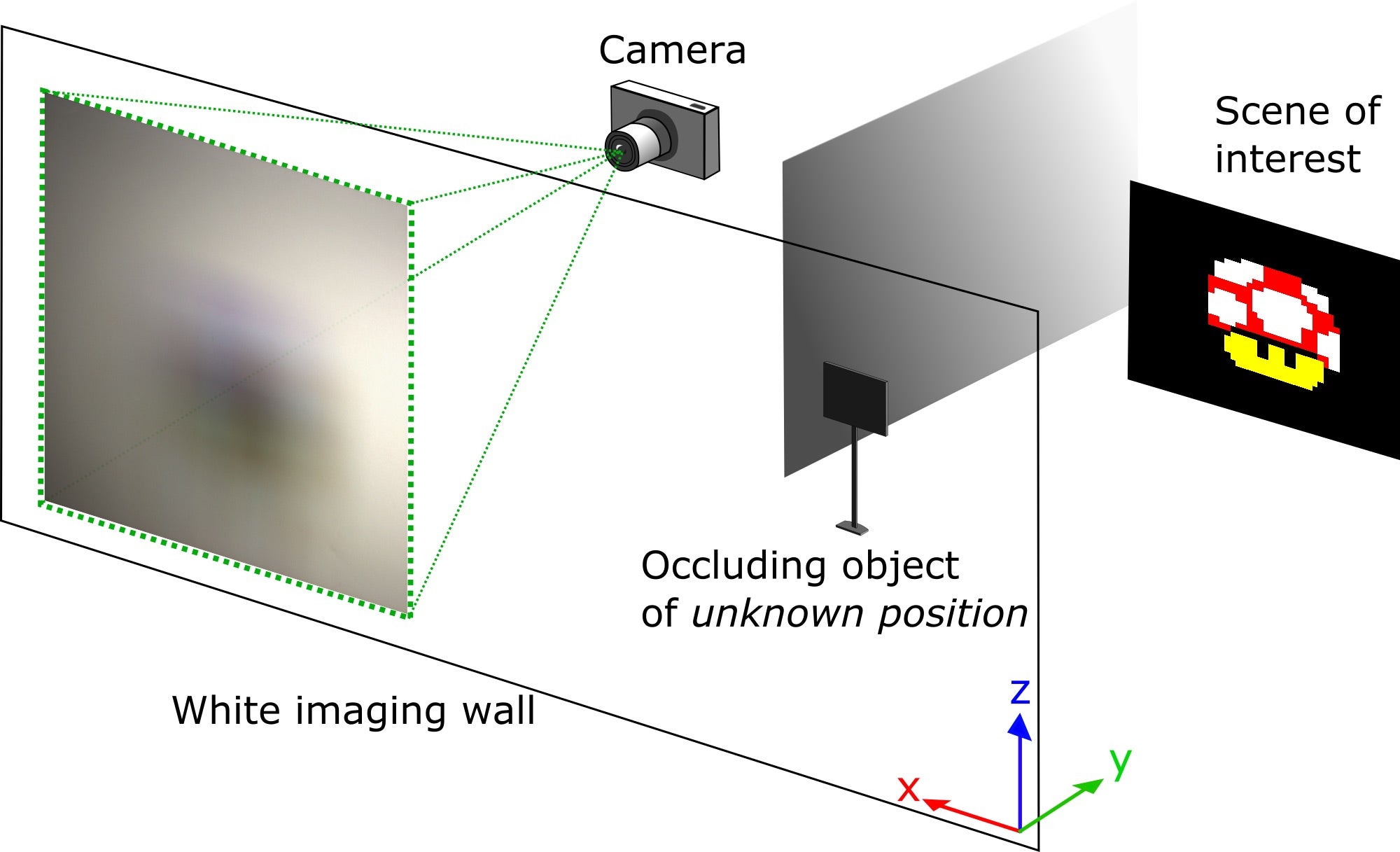

electrical and computer engineer Vivek Goyal and colleagues at Boston University analyzed the problem of looking around a corner by considering light as rays that follow straight lines between surfaces, an approach used in designing optics. They trace the path of light rays coming from an object on one side of a wall that goes around a corner by bouncing off a matte surface and entering a camera on the other side of the wall. In that simple arrangement the camera only sees the matte surface because it scatters the light uniformly.

However, they found that putting a flat opaque “occluder” between the hidden object—an illuminated screen displaying images—and the matte surface changes the picture. The occluder casts shadows that block light from parts of the display screen from reaching parts of the matte surface. The effect is similar to a partial lunar eclipse, where Earth blocks sunlight from reaching parts of the moon.

An LCD monitor displayed the scene. All it needed was a laptop. Source: Nature.

By tracing light rays from the edges of the shadows, Goyal’s team could map what parts of the screen would illuminate what parts of the matte surface. Then they created algorithms that worked backward from images of the matte surface recorded by the digital camera to re-create the pattern shown on the screen.

«

This is totally Blade Runner, isn’t it?

Even with the Google/Fossil deal, Wear OS is doomed • Ars Technica

»

the S3 chip in the Apple Watch Series 3 was claimed to be 70% faster than the S2 SoC. The S4 SoC in this year’s Apple Watch Series 4 is claimed to be two times faster than the S3, and it’s a modern ARM design with 64-bit compatibility.

Wear OS has never once seen the kind of performance increase that the Apple Watch enjoys every single year. If you read Qualcomm’s press releases carefully (2100 launch, 3100 launch), you’ll notice the company never even claims its new smartwatch chip is faster than its old smartwatch chip. We’ve verified this with benchmarks, too. It’s just the same ancient CPU being repackaged over and over.

When it comes to hardware, Google relies on an ecosystem of component vendors to produce a good product. This works fine in established markets like smartphones, but it makes it very hard for a company to break into new form factors that the component vendors aren’t already heavily invested in. Non-Apple smartwatches are not a thriving market, and component vendors would have to take a big risk to develop quality components for a market that doesn’t exist yet. Qualcomm has clearly decided it’s not willing to take that risk.

Wear OS is what happens when a hardware ecosystem collapses. You can build the best hardware and software on Earth, but if it’s all running on a hundred-year-old SoC that is hot, slow, big, and has terrible battery life, you aren’t going to end up with a good product.

«

Amadeo is the guy who does the deep dives into Android OS, and who used to work for the Android Police site.

Feds also say that Oracle underpaid women and minorities • WIRED

»

Oracle allegedly underpaid thousands of women and minority employees by $401m over four years, according to a document filed Tuesday by the US Department of Labor, as part of an ongoing discrimination lawsuit against the software giant.

In the document, the Labor Department also claims that Oracle strongly prefers hiring Asians with student visas for certain roles because they are “dependent upon Oracle for sponsorship in order to remain in the United States,” so the company can systematically underpay them. Between 2013 and 2016, the department says, 90% of the 500 engineers hired through its college-recruiting program for product development jobs at its headquarters in California were Asian. Over the same four years, only six were black.

Once they are employed, Oracle also systematically underpays women, blacks, and Asians relative to their peers, the complaint claims, alleging that these disparities are driven by Oracle’s reliance on prior salaries in setting starting salaries and the company’s practice of steering black, Asian, and female employees into lower paid jobs. The department says some women were underpaid by as much as 20% compared with their male peers, or $37,000 in 2016.

“Oracle’s suppression of pay for its non-White, non-male employees is so extreme that it persists and gets worse over long careers; female, Black, and Asian employees with years of experience are paid as much as 25% less than their peers,” according to an updated complaint filed Tuesday.

«

There’s also a private lawsuit to the same effect. Twenty years ago I knew a woman who received a substantial payout from Oracle in the UK for sexual discrimination. I guess some things are ingrained.

Xiaomi’s folding phone is the best we’ve seen so far • The Verge

»

Xiaomi’s folding phone has been revealed in a teaser video from the company. Xiaomi co-founder and president Lin Bin has posted a nearly minute-long video to Weibo today, detailing the double folding phone. Both sides of the device can be folded backwards to transform it from a tablet form factor into more of a compact phone. Unlike other foldable phones we’ve seen recently, this certainly looks a more practical use for the technology.

Xiaomi doesn’t provide many details about its foldable phone, but Bin reveals the device in the video is simply an engineering model. Bin does note Xiaomi has conquered “a series of technical problems such as flexible folding screen technology, four-wheel drive folding shaft technology, flexible cover technology, and MIUI adaptation.” Xiaomi appears to have adapted its MIUI software for the foldable phone, and a video is seen playing on the device before it converts from tablet to phone mode.

Xiaomi’s folding phone leaked earlier this month, and it’s set to compete against devices like Samsung’s folding phone prototype and Chinese company Royole’s folding device. Huawei is also reportedly planning to launch a foldable device, and Lenovo has previously teased that it was working on bendable phones.

«

I get the feeling that foldable phones are going to be huge in China for commuters. I’d give Xiaomi and Huawei a good chance on this (and it could revive Samsung’s fortunes, briefly). I don’t see Lenovo making it happen – or at least not profitably.

Trump offered NASA unlimited funding to go to Mars by 2020 • NY Mag

Olivia Nuzzi fillets a bit from a new book by Cliff Sims, who worked in the White House and was trying to get Trump onto a call with the International Space Station:

»

As the clock ticked down, Trump “suddenly turned toward the NASA administrator.” He asked: “What’s our plan for Mars?”

[Acting NASA administrator Robert] Lightfoot explained to the president — who, again, had recently signed a bill containing a plan for Mars — that NASA planned to send a rover to Mars in 2020 and, by the 2030s, would attempt a manned spaceflight.

“Trump bristled,” according to Sims. He asked, “But is there any way we could do it by the end of my first term?”

Sims described the uncomfortable exchange that followed the question, with Lightfoot shifting and placing his hand on his chin, hesitating politely and attempting to let Trump down easily, emphasizing the logistical challenges involving “distance, fuel capacity, etc. Also the fact that we hadn’t landed an American anywhere remotely close to Mars ever.”

Sims himself was “getting antsy” by this point. With a number of points left to go over with the president, “all I could think about was that we had to be on camera in three minutes … And yet we’re in here casually chatting about shaving a full decade off NASA’s timetable for sending a manned flight to Mars. And seemingly out of nowhere.”

Trump did not seem worried about the time. Sims wrote that he leaned in toward Lightfoot and made him an offer. “But what if I gave you all the money you could ever need to do it?” Trump asked. “What if we sent NASA’s budget through the roof, but focused entirely on that instead of whatever else you’re doing now. Could it work then?”

Lightfoot told him he was sorry, but he didn’t think it was possible. This left Trump “visibly disappointed,” Sims wrote. “But I tried to refocus him on the task at hand. We were now about 90 seconds from going live.”

Trump wasn’t ready to refocus yet, however. As he walked with Sims from the dining room to the Oval Office, he stopped just outside the door. “He decided to stop in his white-marbled bathroom for one final check in the mirror,” Sims wrote. He had 30 seconds before he was supposed to be on camera, and Sims was “now nearing full on panic.”

In the bathroom mirror, Trump smirked and said to himself, “Space Station, this is your President.”

«

About us • AlgoTransparency

»

How did you identify YouTube’s most often recommended videos?

We used a multi-step program to analyze videos recommended by its algorithm in response to searches for the names of the different candidates.

Step 1: Using an account with no viewing history, the program searches for a given candidate’s name on YouTube.

Step 2: We collect the top six results.

Step 3: For each result, we follow the three first recommendations (videos in the “Up next” list).

Step 4: For each recommendation, we get the top 3 recommendations. We repeat this 6 different times.

The program tabulates how many times each video is recommended, which is then used to determine which videos YouTube most often recommends for any given candidate.

«

It’s dismaying how awful the results are. From any reasonable query, you’re quite quickly led down a rabbithole of nonsense.
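For the curious, the counting procedure in that extract can be sketched in a few lines of Python. This is my own rough reading, not AlgoTransparency's code: `search` and `recommend` are hypothetical callables standing in for whatever fetches YouTube's search results and "Up next" list, and the toy data is invented. The extract doesn't make clear what "repeat this 6 different times" covers, so the number of levels followed is left as a `depth` parameter, and a video reached twice is simply followed twice.

```python
from collections import Counter
from typing import Callable, List


def count_recommendations(
    query: str,
    search: Callable[[str], List[str]],      # hypothetical: query -> video ids
    recommend: Callable[[str], List[str]],   # hypothetical: video id -> "Up next" ids
    top_results: int = 6,
    branch: int = 3,
    depth: int = 2,                          # assumed; the extract is ambiguous
) -> Counter:
    """Tally how often each video is recommended, starting from a search."""
    counts: Counter = Counter()
    frontier = search(query)[:top_results]   # Steps 1 and 2
    for _ in range(depth):                   # Steps 3 and 4
        next_frontier = []
        for video in frontier:
            for rec in recommend(video)[:branch]:
                counts[rec] += 1
                next_frontier.append(rec)
        frontier = next_frontier
    return counts


# Invented toy data in place of real YouTube responses.
TOY_SEARCH = {"candidate": ["v1", "v2"]}
TOY_UP_NEXT = {
    "v1": ["v3", "v4"],
    "v2": ["v3"],
    "v3": ["v5"],
    "v4": ["v5"],
}


def toy_search(query: str) -> List[str]:
    return TOY_SEARCH.get(query, [])


def toy_recommend(video: str) -> List[str]:
    return TOY_UP_NEXT.get(video, [])


tally = count_recommendations("candidate", toy_search, toy_recommend)
print(tally.most_common())
```

In the toy graph every path ends at `v5`, so it comes out on top of the tally even though it never appears in the search results, which is the rabbithole effect in miniature.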

Errata, corrigenda and ai no corrida: Concerned about the security of your Nest account after yesterday’s story? You can get two-factor authentication for it. SMS-only at present, but better than just your password, right? (Thanks Richard G for the link.)

You can sign up to receive each day’s Start Up post by email. You’ll need to click a confirmation link, so no spam.

Re. Wear OS. Ron Amadeo is weird. He is an outstanding OS/software reviewer, and a dismal device reviewer. He’s been getting very strong pushback from the get-go for presenting his personal opinions as universal truths, mostly about random/irrelevant stuff: the order of Android’s navigation buttons, bezels, materials… and has not taken the feedback into account at all – he seems to be THE Ars writer that never ventures into comments. And he seems to be getting worse: one of his latest pieces is actually titled “Nokia 8.1 Plus is the company’s most modern-looking phone yet”, as if readers went to Ars for pronouncements on a phone’s… looks? I’d take anything he says with a trowel of salt.

His thesis on Wear OS is that the CPUs are too slow and the economics preclude designing faster ones. I’m unclear how this matters: Qualcomm’s 2100 and 3100 have 5-years-ago-flagship performance; that’s plenty to create and move pixels on a 400×400 screen. Users in the comments seem OK with the performance.

My thesis is that smartwatches are a failure of segmentation: we need different products for health, fitness and social. Also, make them look not like wrist-attached dreadnoughts. I think Mobvoi is on the right track: https://www.mobvoi.com/uk/pages/ticwatchc2 . Tellingly though, Xiaomi has no Wear OS device.

The return of the revenge of the spork, II: https://www.laboutiquedegeorgette.com/14-georgette-aventure

That one is… much better I guess, because it is also a knife and a little spoon. Yeah!

Blast from the past: Backblaze’s HDD reliability figures for 2018 are out: https://www.guru3d.com/news-story/2018-hdd-failure-rates-report-from-backblaze.html

Interesting as much for what they say (high-capacity drives don’t fail more than low-capacity drives, at least until 14TB), as for what they don’t: only one Western Digital drive, and it’s the worst of the mainstream drives. Matches my experience 😦

Hardware.fr used to have a very interesting return rate yearly recap, but the guy kind of wandered off. https://www.hardware.fr/articles/962-2/cartes-meres.html

Is anyone taking bets that Trump will sneak into the House and do his state of the union anyway?

This is intriguing: https://www.gsmarena.com/xiaomi_launches_sharesave_in_india_a_platform_for_buying_products_from_china-news-35225.php , I’m assuming it’ll be tweaked and world-wide’d eventually.

Aside from excellent phones in both the value and high-end segments, Xiaomi has a lot more products than even Huawei in the PC, IoT, fashion, electronics… markets, and archrival BBK has essentially none. Xiaomi also has name-brand shops (quite a few in China and India, a smattering in the EU), and now seems to be launching its own e-tailer with a social twist and a large catalog.

What’s amazing is that they seem to be succeeding in creating a brand image (Chinese cheap but with a brand’s design, quality and service) and a whole ecosystem, when I really thought the openness of Android made such attempts futile; and at the same time they’re creating their own distribution. I’m amazed they haven’t had a bad misstep juggling so many balls (though that “48 megapixels” camera on the Note 7 is iffy 😦 ).

It’s fascinating watching a conglomerate being born. Who knew things weren’t fatally ossified yet? How asleep at the wheel must the incumbents (LG, Sony, even Samsung and Lenovo) be?